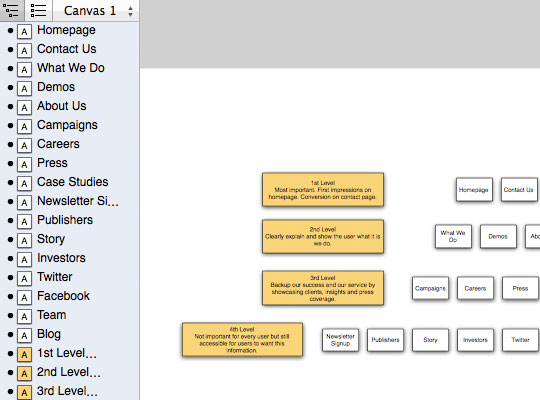

One of these diagrams is shown here as an example of what the output looks like for 2 levels. Often multiple diagrams are needed to organise the site information in a digestible way. The diagrams can get a bit dense and hard to read with more than 3 levels, which can be overcome by running each folder and subdirectory separately - generating a series of diagrams to represent the full site. For this particular website the pages surpassed 300k and there were an enormous amount of folders and subdirectories. The outputs are fairly good for graphviz standards it’s known for it’s pared down diagrams. I use Screaming Frog SEO, despite the weird name (great SEO!), I find it has the best functionality and price for what it does, including generating useful reports about site depth and link structure.Īfter collecting and loading the urls, the script splits the folders and tokenizes them to generate levels in the graphviz diagrams. There are a few site mappers on the market, e.g. For such a big crawl I prefer to use a software crawler to manage any unforeseen stops or starts and to make intermittent saves.

You can create a script in python to do the crawling using a library such as beautiful soup (as the tutorial above does) but I’ve found that on Australian ‘broadband’, with a mediocre computer and a website with more than 100k pages that can take at least 12 hours, usually more. For a really large site this is going to take a bit of time. Fortunately, websites this size can be mapped programmatically following this tutorial on generating sitemap diagrams with Python and graphviz. The downside is that it’s an old script that hasn’t been updated for a while and it can’t handle a website with pages in the 100,000’s. The benefit was the ability to customise the sitemap layout inside Omnigraffle, allowing the creation of nicer looking diagrams. Although it took a couple of minutes after set up to run the script, that method worked okay. Between 2015–2016 I was working on a site with pages in the tens of thousands and next I’ll be working for an institution with a web site with pages numbering hundreds of thousands.įor the ‘tens of thousands’ site - I used Omnigraffle and an apple script ( available here) to generate sitemaps. In 2014 I was working on a web app with screen states numbering in the hundreds - which was starting to hit the limit of manual sitemap creation. This is mostly due to company size but might also reflect progress in data transfer and storage. The size of the websites/software I’ve worked on has grown exponentially over the years. However when the size of the platform reaches around 100 screens, manual sitemap creation starts to get untenable and a programatic solution is needed. Spending a few hours creating these diagrams manually in a program like Omnigraffle is fine for a smaller site, and going through a site manually is useful for familiarising yourself with it deeply.

Great - but what happens when the site is too large to do this manually? They can be used to communicate the site content and structure to others, and to inform and act as a reference for changes to the information architecture, navigation, site structure or the UI. For an existing platform, understanding the current structure is one of the first steps a User Experience Designer working on a software project should take - you need to understand the content or functionality you’re going to be working with as well as who the users are.Ī good way of doing this is by creating sitemaps or flow charts - sitemaps also contribute to UX Design processes beyond initial research. Software designers generally work on two types of projects: redesigning existing products or creating new ones from scratch. Generating sitemap diagrams for massive websites
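To make the folder-splitting step described above a bit more concrete, here is a minimal sketch of the idea. It is not the script from the tutorial: the function names (`url_to_levels`, `build_edges`, `to_dot`), the `home` root label and the example URLs are all made up for illustration. It uses only the Python standard library and writes plain Graphviz DOT text rather than calling a Graphviz binding.

```python
from urllib.parse import urlparse


def url_to_levels(url, max_levels=2):
    """Split a URL path into folder tokens, keeping at most max_levels."""
    path = urlparse(url).path
    tokens = [t for t in path.split("/") if t]
    return tokens[:max_levels]


def build_edges(urls, max_levels=2, root="home"):
    """Return the set of (parent, child) edges implied by the URL folders."""
    edges = set()
    for url in urls:
        parent = root
        for token in url_to_levels(url, max_levels):
            # Use the full path as the node id so that folders with the
            # same name under different parents stay separate nodes.
            child = f"{parent}/{token}"
            edges.add((parent, child))
            parent = child
    return edges


def to_dot(edges):
    """Render edges as DOT source, labelling each node by its last token.

    Assumes tokens contain no double quotes; real URLs may need escaping.
    """
    lines = ["digraph sitemap {", "  rankdir=LR;"]
    nodes = sorted({node for edge in edges for node in edge})
    for node in nodes:
        label = node.rsplit("/", 1)[-1]
        lines.append(f'  "{node}" [label="{label}"];')
    for parent, child in sorted(edges):
        lines.append(f'  "{parent}" -> "{child}";')
    lines.append("}")
    return "\n".join(lines)


# Hypothetical URLs standing in for a crawler export.
urls = [
    "https://example.com/about/team/alice",
    "https://example.com/about/contact",
    "https://example.com/courses/design/ux-101",
]
edges = build_edges(urls, max_levels=2)
print(to_dot(edges))
```

Saving the printed text to a `.dot` file and rendering it with Graphviz should give a two-level diagram. To follow the one-diagram-per-folder approach, you could filter `urls` by their first token before calling `build_edges`.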
